We, the humans of Earth, now share this planet with an intelligence too great for us to understand. We are the second smartest thing on this planet and forever will be, until we destroy ourselves or are destroyed by It, whatever shape It may take.

For those of you who haven’t closely followed AI, you might not have heard that a computer has beaten one of the world’s top professional players at Go, an ancient Chinese board game. Even if you follow AI, you might not see why this is meaningful, since Deep Blue beat the reigning world chess champion Garry Kasparov long ago, in 1997. And yet here we are. Not much has changed.

But today, everything has changed.

There is something fundamentally different, and quite astounding, about this victory and about the approach to AI that achieved it. So astounding, in fact, that no one really understands how or why it happened. And that, my fellow humans, is the terrible portent of things to come.

I will attempt to illustrate why this is meaningful, which may be a bit difficult because superintelligence is, by its nature, beyond human comprehension. To bolster my argument, I will make a bold prediction on this day, March 12, 2016: Lee Sedol will lose his remaining two games in the five-game match against AlphaGo. Not only that, but no human will beat the best computer for the rest of time. This is the new normal. Go, as a game, will continue, but computers will be ranked separately from humans. Perhaps someday a human, by diligently studying many computer games of Go, will be able to beat the weakest of these AIs: today’s AlphaGo. But AlphaGo in 2017 will be stronger than today, and stronger yet in 2018. We don’t know the limit yet. Perhaps we will never know how strong Go AI can become.

Once upon a time, you could race a car against a human and get a fair race. Or a car against a horse. Now, we just know the car is faster. And now, we just know that machines are smarter. But there is one difference between the machine being smarter and the machine being faster. We know how much faster the machine is than we are. We don’t know and can never know how much smarter the machine is than we are.

If that is difficult to comprehend, let me try to illustrate with an analogy. Have you seen the movie Edge of Tomorrow? It stars Tom Cruise as a soldier who can go back in time, reliving the same day over and over, in order to defeat an alien invasion. He uses this ability like the save/restore feature of a video game, avoiding all of the bad outcomes, no matter how unlikely. In essence, his superpower is a kind of time travel. He knows exactly what the alien is going to do before it does it.

An AI does a similar thing, except that instead of playing it out in real life, it plays it out in its “mind”. It replays millions or billions of scenarios in which Tom Cruise wins or loses after making one tiny decision, and sorts them into the win column or the lose column. After adding up all of the scenarios, it knows that picking up the gun has a 50% chance of winning, while not picking up the gun has a 70% chance. Not picking up the gun may seem counterintuitive to a soldier, but the AI does not care. It just knows that it has a better chance of winning if it doesn’t pick up the gun.
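The “replay millions of futures and tally the wins” idea can be sketched in a few lines. This is flat Monte Carlo evaluation: for each candidate move, play many random games to the end and keep the move with the best win rate. Go is far too big for a short example, so this minimal sketch assumes a toy stand-in game (players alternately take 1 to 3 stones from a pile; whoever takes the last stone wins). The game, the function names, and the playout count are all illustrative assumptions, not how any real Go engine is written.

```python
import random

# Hypothetical toy game standing in for Go: players alternately remove
# 1, 2, or 3 stones from a pile, and whoever takes the last stone wins.
MOVES = (1, 2, 3)

def random_playout(stones, rng):
    """Play uniformly random moves from this position.

    Returns True if the player to move at the start takes the last stone.
    """
    starting_player_to_move = True
    while True:
        take = rng.choice([m for m in MOVES if m <= stones])
        stones -= take
        if stones == 0:
            return starting_player_to_move
        starting_player_to_move = not starting_player_to_move

def estimate_win_rates(stones, playouts=2000, seed=0):
    """For each legal move, replay many random futures and count the wins."""
    rng = random.Random(seed)
    rates = {}
    for take in MOVES:
        if take > stones:
            continue
        remaining = stones - take
        if remaining == 0:
            rates[take] = 1.0  # taking the last stone wins outright
            continue
        # After our move the opponent is to move, so we win whenever they lose.
        wins = sum(not random_playout(remaining, rng) for _ in range(playouts))
        rates[take] = wins / playouts
    return rates

def best_move(stones, playouts=2000, seed=0):
    """Pick the move whose replayed futures won most often."""
    rates = estimate_win_rates(stones, playouts, seed)
    return max(rates, key=rates.get), rates

move, rates = best_move(5)
print("take", move, "stone(s); estimated win rates:", rates)
```

With five stones left, `best_move(5)` should prefer taking one stone: under random play its estimated win rate lands near two-thirds, versus roughly one-half for taking two or three. The estimates are noisy, and more playouts make them sharper, which is why raw computing time matters so much for this approach.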

From there it starts to build an insurmountable lead. You, as the opponent fighting the AI that can rewind time, must find the exact move that is worst for the AI, in a position the AI has already calculated as 70% in its favor. If you fail to do so, you are helping the AI. The AI’s percentage goes up, because you made a mistake: you deviated from the optimal path. Now the AI can choose between two moves that offer an 80% or a 90% win probability. At some point, the computer knows all of the outcomes. In chess, the computer would announce something like checkmate in 23 moves. If you play the next move optimally, the computer will announce checkmate in 22 moves. If you make a huge mistake, it may say checkmate in 5 moves. Once it has reached that state of certainty, there is no turning back. Everything is already known by the computer, if not by you.
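When a game is small enough to search completely, the computer stops estimating and simply knows, which is what “checkmate in 23 moves” means. A hypothetical exact solver for the same kind of toy take-away game (an assumption standing in for chess) shows the idea: the winning side picks the fastest forced win, the losing side stalls as long as it can, and the result is a count of moves until the end. The function name and the game are illustrative only.

```python
from functools import lru_cache

# Hypothetical toy game: take 1, 2, or 3 stones; taking the last stone wins.
MOVES = (1, 2, 3)

@lru_cache(maxsize=None)
def solve(stones):
    """Return (wins, plies) for the player to move under perfect play.

    wins:  True if the player to move can force a win.
    plies: moves by either side until the game ends, assuming the winner
           finishes as fast as possible and the loser delays as long as
           possible (the analogue of "checkmate in N").
    """
    if stones == 0:
        # The previous player took the last stone: the player to move has lost.
        return (False, 0)
    results = [solve(stones - m) for m in MOVES if m <= stones]
    # Moves that leave the opponent in a lost position are winning moves.
    winning = [plies for opponent_wins, plies in results if not opponent_wins]
    if winning:
        return (True, 1 + min(winning))  # fastest forced win
    # Every move loses, so stall for as long as possible.
    return (False, 1 + max(plies for _, plies in results))

for pile in (4, 5, 8, 9):
    print(pile, solve(pile))
```

For instance, `solve(9)` returns `(True, 5)`: the player to move can force a win in five plies by taking one stone, whereas `solve(8)` is lost no matter what is played.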

This ability is a terribly oppressive way to win a game. How do you beat an opponent who can replay the future?

Now, here’s where I convince you that AlphaGo is too smart for any human to ever beat again. Remember the scene in The Princess Bride where Westley surprises the Spaniard in the duel by saying, “Well, I’m not left-handed, either!” This is that moment! Savor it.

Until today, the method I described above is precisely what every Go AI relied on, and the best humans beat every one of them. As it turns out, Go has far too many possible moves to consider in a reasonable amount of time, so the computer cannot compute all of the possibilities. That’s right: Tom Cruise’s time-rewinding ability was strong, but not strong enough to overcome the combinatorial explosion of choices available in Go.
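A rough way to see the combinatorial explosion is to estimate the size of the game tree as b^d, where b is the typical number of legal moves per turn and d is the typical length of a game. Using the commonly quoted approximations b ≈ 35, d ≈ 80 for chess and b ≈ 250, d ≈ 150 for Go (order-of-magnitude estimates, not exact counts):

$$
\text{chess: } 35^{80} = 10^{80 \log_{10} 35} \approx 10^{123.5}
\qquad
\text{Go: } 250^{150} = 10^{150 \log_{10} 250} \approx 10^{359.7}
$$

By this estimate Go’s tree is larger than chess’s by a factor of roughly 10^236, far beyond what any amount of brute-force rewinding can cover.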

The rewind superpower is not good enough. Every Go program that relied on it failed to beat the best humans. So how does AlphaGo win? Simple. It doesn’t rely on that superpower.

Instead, AlphaGo has a superpower that is orders of magnitude better than what Tom Cruise had in Edge of Tomorrow. Think about that for a moment. Imagine facing an adversary who would beat you, even if you had the time-rewinding superpower, as easily as someone with that superpower would beat an ordinary person. We cannot begin to comprehend an intelligence that we could not beat no matter how many times we rewound time. We cannot comprehend how to beat it, or even whether we are winning or not.

One thing that humans do when trying to win a game is to try to score more points. This is as true in Go as it is in football. But the computer doesn’t need that crutch. It only needs to win the game, even by the narrowest margin. If a move will cost it points but improve its chances of winning in the end, it will simply give up the points. To make a football comparison, imagine an AI that would sometimes intentionally give up field goals and touchdowns, but would win by a small margin more often. How would you beat such an AI? You don’t know whether you are scoring a touchdown because you are stronger than the AI, or because the AI is so much stronger that it is letting you score, since doing so improves its overall odds of winning, albeit by a smaller margin. You can’t comprehend it. You merely see the outcome: you’ve lost, despite playing a game that seemed free of human mistakes.
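The difference between playing for points and playing for the win can be made concrete with two hypothetical strategies. The numbers below are invented for illustration, and `Plan` is a made-up helper, not anything AlphaGo exposes: one plan wins big but often loses, the other wins narrowly but almost always.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Plan:
    """A hypothetical strategy, described by its possible final score margins.

    outcomes: (margin, probability) pairs; a positive margin means a win.
    """
    name: str
    outcomes: tuple

    def win_probability(self) -> float:
        return sum(p for margin, p in self.outcomes if margin > 0)

    def expected_margin(self) -> float:
        return sum(margin * p for margin, p in self.outcomes)

# Made-up numbers for illustration only.
aggressive = Plan("aggressive", ((20, 0.6), (-5, 0.4)))  # big wins, frequent losses
safe = Plan("safe", ((1, 0.9), (-10, 0.1)))              # narrow wins, rare losses
plans = [aggressive, safe]

# A points-maximizer and a win-maximizer pick different plans.
by_margin = max(plans, key=Plan.expected_margin)
by_win_rate = max(plans, key=Plan.win_probability)

print(f"{by_margin.name}: expected margin {by_margin.expected_margin():+.1f}")
print(f"{by_win_rate.name}: wins {by_win_rate.win_probability():.0%} of games")
```

Here a points-maximizer picks the aggressive plan (expected margin of about +10), while a win-maximizer picks the safe plan (90% wins) even though its expected margin is slightly negative. That is the football AI that gives up touchdowns.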

AlphaGo’s superpower is truly amazing because it doesn’t depend on brute-force time-rewinding. It does something similar to what humans do: it recognizes patterns using a neural net. In other words, it has a kind of brain that works differently from conventional computer programs. And as with a brain, we don’t really know what’s going on inside. This is very unlike a computer chess AI, which can tell us what it’s “thinking”: you can watch all of the replays of Tom Cruise trying out different things and examine why it considers a particular choice the best.
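A neural net’s “pattern recognition” can be sketched as a single function call: the board goes in, a probability for each possible move comes out, and nothing resembling a list of replayed futures is produced along the way. This minimal sketch assumes a toy 3×3 board and random, untrained weights; AlphaGo’s real networks were far larger deep convolutional networks trained on millions of positions, so treat this only as an illustration of why the network’s “reasons” are just numbers.

```python
import math
import random

# Hypothetical toy board: 3x3 flattened to 9 cells,
# +1 = our stone, -1 = opponent's stone, 0 = empty.
N_CELLS, N_HIDDEN = 9, 16

# Random stand-in weights (assumption): an untrained one-hidden-layer net.
rng = random.Random(42)
W1 = [[rng.gauss(0, 0.5) for _ in range(N_CELLS)] for _ in range(N_HIDDEN)]
W2 = [[rng.gauss(0, 0.5) for _ in range(N_HIDDEN)] for _ in range(N_CELLS)]

def policy(board):
    """One forward pass: board -> probability for each cell. No lookahead."""
    # Hidden layer with ReLU activation.
    hidden = [max(0.0, sum(w * x for w, x in zip(row, board))) for row in W1]
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in W2]
    # Occupied cells are illegal, so mask them out before the softmax.
    logits = [z if cell == 0 else -math.inf for z, cell in zip(logits, board)]
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

board = [1, 0, -1,
         0, -1, 0,
         0, 0, 1]
probs = policy(board)
move = max(range(N_CELLS), key=probs.__getitem__)
print("pick cell", move, "with probability", round(probs[move], 3))
```

Asking this sketch why it picked that cell yields only the weight matrices `W1` and `W2`; there is no replay to inspect.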

AlphaGo picks what it feels is the best choice, just as humans do. Except AlphaGo can train much faster, on many more games than any human could. Humans train by studying games, too. But humans hinder themselves by framing those games in ideas and concepts such as sente (a kind of tempo), thickness (a kind of defensive wall), and other very human concepts, in order to form common building blocks and to divide the game into pieces that are not too overwhelming. AlphaGo needs no such crutch. In fact, it doesn’t even understand the human concepts of sente, thickness, or anything else. Humans who look at the game afterwards may comment on those constructs, just as scientists can describe physical phenomena mathematically with formulas and equations. But that is a description after the fact. It is not, in any way, how AlphaGo “thinks” about its moves.

The expert commentators on the games have gotten chills from watching AlphaGo play Lee Sedol, because the computer makes moves that defy the human understanding of the game. The human framing of breaking things down into Go “concepts” is alien to AlphaGo, and so its results are alien to us. It is not constrained by the human concepts that have evolved over 3000 years. It goes beyond them into superhuman intelligence territory. I envy those Go experts, since they truly understand what it’s like to face a superintelligence. They have described it as both terrifying and exciting.

Today, we are all Lee Sedol, the second smartest things on this planet. The smartest things are so smart, we can’t even comprehend them. And so, today, we shall usher in this new era of computer superintelligence which is both terrifying and exciting. May our future robotic overlords have mercy on our tiny flawed human minds.

https://gogameguru.com/alphago-shows-true-strength-3rd-victory-lee-sedol/

2020-10-07 Update:

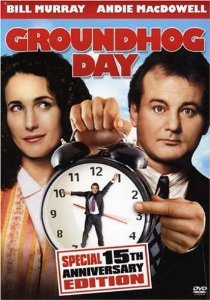

Watch this video about the Lee Sedol vs. AlphaGo match to get a sense of the human tension and stakes of this game. It gives some context for those of you who don’t know enough about Go to understand why a large portion of the world’s population considers it a game that reflects our human intelligence, spirit, and creativity.

It was deeply unsettling to me when AlphaGo beat Lee Sedol, and this movie dramatizes why I felt that way. Watch it even if you don’t understand anything about Go. Watch it especially if you don’t. You’ll understand more about Go and about how people have found a uniquely human spirit in it. And you’ll be relieved that a machine cannot entirely steal that spirit from us human beings.